AI can help make orchestration workflows more flexible and easier to use, but configuration changes still require precision, validation, and control. A guarded agentic approach offers one practical way to introduce AI into network orchestration without weakening operational discipline.

Using AI in network orchestration is becoming increasingly realistic. For network engineers, the appeal is clear: a model can interpret natural-language requests, assist with lookups, and help connect user intent with workflow execution. At the same time, orchestration workflows that write to infrastructure cannot depend on a model being correct every time. If a model invents a value, misreads context, or follows the wrong chain of tool calls, the result may still look plausible while being operationally wrong.

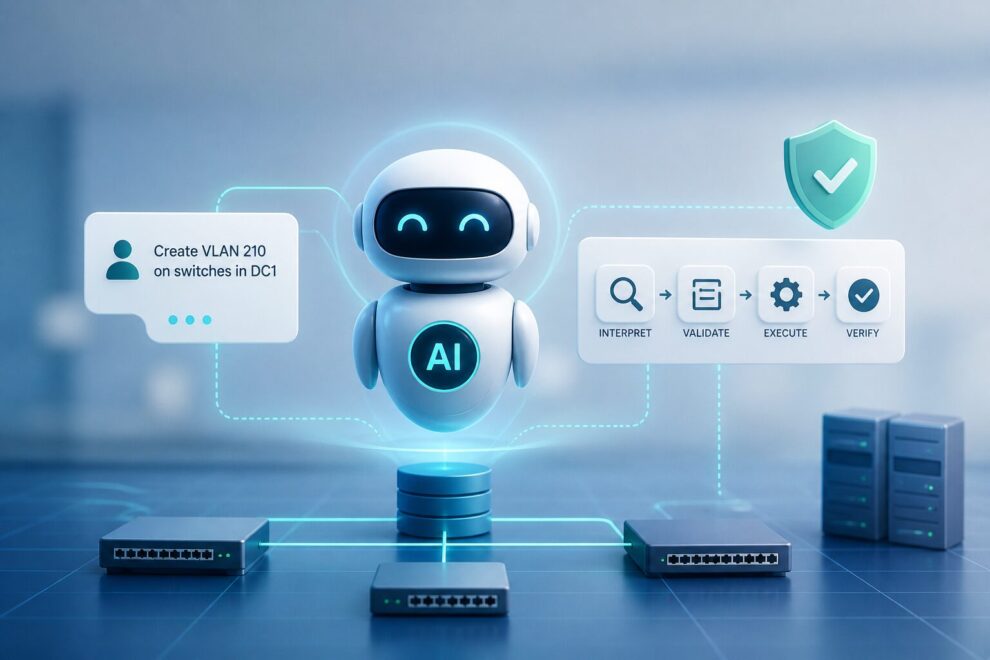

From intent to controlled execution

To explore how AI can be introduced safely, we examined a small but realistic task in WP6T2: managing a VLAN, with NetBox as the source of truth and n8n as the workflow engine. Although limited in scope, this task captures many of the difficulties that arise when AI is placed in an orchestration path.

The main lesson was that the AI model should not be treated as the workflow itself. A more dependable pattern is a guarded agentic approach, in which AI handles natural-language interpretation and task decomposition, while deterministic workflow logic remains responsible for normalisation, guard evaluation, approval handling, execution, and verification. In practice, one agent interpreted the request and specialised agents resolved the necessary information. Deterministic steps then proposed the patch, waited for change approval, and applied the change, after which an agent verified the resulting state. If verification fails, rollback must remain available.
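The division of labour described above can be sketched as a small pipeline. This is a minimal illustration under stated assumptions, not the WP6T2 implementation: every stage is a plain Python callable, `Change`, `run_guarded`, and the stage names are hypothetical, and an in-memory dict stands in for NetBox. The AI-backed stages only produce data; whether a change is approved, kept, or rolled back is decided in code, even if the `verify` stage itself is agent-assisted.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Change:
    """Hypothetical record of one change moving through the guarded pipeline."""
    request: str
    intent: dict = field(default_factory=dict)
    patch: dict = field(default_factory=dict)
    before: Optional[dict] = None
    status: str = "new"


def run_guarded(
    change: Change,
    interpret: Callable[[str], dict],   # AI: natural language -> intent
    resolve: Callable[[dict], dict],    # AI: specialised lookup agents
    propose: Callable[[dict], dict],    # deterministic: build the patch
    approve: Callable[[dict], bool],    # deterministic: change-approval gate
    apply: Callable[[dict], dict],      # deterministic: write, return prior state
    verify: Callable[[dict], bool],     # post-change check of the resulting state
    rollback: Callable[[dict], None],   # restore the prior state
) -> Change:
    change.intent = interpret(change.request)
    change.patch = propose(resolve(change.intent))
    if not approve(change.patch):
        change.status = "rejected"
        return change
    change.before = apply(change.patch)
    if verify(change.patch):
        change.status = "verified"
    else:
        rollback(change.before)
        change.status = "rolled_back"
    return change


# Usage with stub stages; this dict is a stand-in for the source of truth.
state: dict = {}


def apply_patch(patch: dict) -> dict:
    before = dict(state)   # snapshot taken before writing
    state.update(patch)
    return before


def restore(before: dict) -> None:
    state.clear()
    state.update(before)


result = run_guarded(
    Change("create VLAN 120 named users"),
    interpret=lambda text: {"vid": 120, "name": "users"},
    resolve=lambda intent: intent,
    propose=lambda resolved: {resolved["vid"]: resolved["name"]},
    approve=lambda patch: True,
    apply=apply_patch,
    verify=lambda patch: all(state.get(k) == v for k, v in patch.items()),
    rollback=restore,
)
print(result.status, state)  # verified {120: 'users'}
```

In n8n, each stage would more naturally be a node or sub-workflow; the sketch only makes the control flow explicit, so that the model never decides on its own whether a change is applied or kept.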

Why guardrails matter

One of the weakest points in our testing was multi-step MCP tool use. In theory, a single agent should be able to call MCP tools several times, combine the results, and move towards the correct final action. In practice, this proved fragile. When several dependent calls were needed in sequence, the agent could lose track of intermediate results or choose an unsuitable next step. This is especially important in orchestration, where one lookup often depends on the previous one and the final action is only correct if the entire chain is handled properly.
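One way to avoid relying on an agent to carry intermediate results across dependent calls is to let code perform the chain and stop at the first empty result. The sketch below is a minimal illustration assuming a hypothetical in-memory inventory in place of NetBox or MCP tool calls; the helper functions and the `min_vid`/`max_vid` fields are illustrative and do not reflect NetBox's actual schema or API.

```python
# Hypothetical in-memory stand-in for NetBox data, keyed for illustration only.
SITES = {"ams1": {"id": 1}}
VLAN_GROUPS = {1: {"id": 10, "min_vid": 100, "max_vid": 199}}  # keyed by site id
VLANS = {10: [100, 101, 103]}                                    # keyed by group id


class ResolutionError(Exception):
    """Raised when a lookup in the chain returns nothing usable."""


def site_id(slug: str) -> int:
    site = SITES.get(slug)
    if site is None:
        raise ResolutionError(f"unknown site {slug!r}")
    return site["id"]


def vlan_group_for(site: int) -> dict:
    group = VLAN_GROUPS.get(site)
    if group is None:
        raise ResolutionError(f"no VLAN group for site id {site}")
    return group


def next_free_vid(group: dict) -> int:
    used = set(VLANS.get(group["id"], []))
    for vid in range(group["min_vid"], group["max_vid"] + 1):
        if vid not in used:
            return vid
    raise ResolutionError(f"VLAN group {group['id']} is full")


def resolve_new_vlan(site_slug: str) -> dict:
    # Each step consumes the previous step's result directly, so no
    # intermediate value has to survive inside a model's context.
    sid = site_id(site_slug)
    group = vlan_group_for(sid)
    return {"site": sid, "group": group["id"], "vid": next_free_vid(group)}


print(resolve_new_vlan("ams1"))  # {'site': 1, 'group': 10, 'vid': 102}
```

An agent can still choose which chain to run, but the hand-off between dependent steps no longer depends on the model keeping track of earlier results, and a failure names the exact step that broke.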

A single orchestration agent responsible for planning, lookups, and execution support is workable for simple cases and demos, but it proved less stable as a basis for production environments. That experience was one of the reasons for moving towards a more guarded design with specialised agents and deterministic checks between stages.

The same fragility explains why smaller, specialised agents worked better than a single general-purpose orchestration agent. Separating planning, lookup, and verification reduced ambiguity and made failures easier to trace. It also reduced the amount of multi-step reasoning and tool chaining expected from any one agent.
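Part of what keeps a specialised agent narrow is that it only needs a few tools. The following is an illustration only, not a description of the WP6T2 setup: it assumes a hypothetical role-to-tool allowlist enforced by the dispatcher rather than by the prompt, and the tool names and return values are placeholders rather than real MCP tools.

```python
from typing import Any, Callable, Dict, Set


# Hypothetical tool implementations; in practice these would be MCP tools.
def get_site(slug: str) -> dict:
    return {"slug": slug, "id": 1}


def get_vlans(site_id: int) -> list:
    return [100, 101]


def create_vlan(vid: int, name: str) -> dict:
    return {"vid": vid, "name": name}


TOOLS: Dict[str, Callable[..., Any]] = {
    "get_site": get_site,
    "get_vlans": get_vlans,
    "create_vlan": create_vlan,
}

# Each specialised agent sees only the tools its role needs. No role may
# call create_vlan: writes are left to deterministic workflow steps.
ALLOWED: Dict[str, Set[str]] = {
    "planner": set(),
    "lookup": {"get_site", "get_vlans"},
    "verifier": {"get_vlans"},
}


class ToolNotAllowed(Exception):
    pass


def dispatch(role: str, tool: str, **kwargs: Any) -> Any:
    """Run a tool call on behalf of an agent, refusing anything outside its role."""
    if tool not in ALLOWED.get(role, set()):
        raise ToolNotAllowed(f"{role} may not call {tool}")
    return TOOLS[tool](**kwargs)


print(dispatch("lookup", "get_site", slug="ams1"))  # {'slug': 'ams1', 'id': 1}
```

Enforcing the restriction in the dispatcher means an agent that drifts towards an unsuitable next step is stopped with a traceable error instead of silently widening its own scope.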

Another important lesson was that prompts and structured output parsers are helpful, but not sufficient on their own. Prompt instructions can reduce some errors, and structured parsing can improve consistency, but neither should be treated as the final guarantee. The strongest safeguards came from deterministic checks around the model output, including explicit normalisation, code-based guard evaluation, approval handling, and post-change verification.
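Explicit normalisation and code-based guard evaluation can be illustrated with a VLAN request. The sketch assumes the structured output parser has already produced a dict; the allowed actions and sites, the naming regex, and the function names are hypothetical policy choices. The 1 to 4094 bound reflects the usable IEEE 802.1Q VLAN ID range, since IDs 0 and 4095 are reserved.

```python
import re
from typing import Any, Dict, List, Optional, Set

ALLOWED_ACTIONS = {"create", "update", "delete"}
ALLOWED_SITES = {"ams1", "lon2"}                     # hypothetical scope
NAME_RE = re.compile(r"^[a-z0-9][a-z0-9_-]{0,63}$")  # hypothetical naming policy


def _to_int(value: Any) -> Optional[int]:
    try:
        return int(str(value).strip())
    except ValueError:
        return None


def normalise(raw: Dict[str, Any]) -> Dict[str, Any]:
    """Coerce parsed model output into canonical types and spellings."""
    return {
        "action": str(raw.get("action", "")).strip().lower(),
        "site": str(raw.get("site", "")).strip().lower(),
        "vid": _to_int(raw.get("vid")),
        "name": str(raw.get("name", "")).strip().lower().replace(" ", "-"),
    }


def evaluate_guards(intent: Dict[str, Any], existing_vids: Set[int]) -> List[str]:
    """Return every violated guard; an empty list means the intent may proceed."""
    errors: List[str] = []
    if intent["action"] not in ALLOWED_ACTIONS:
        errors.append(f"unsupported action {intent['action']!r}")
    if intent["site"] not in ALLOWED_SITES:
        errors.append(f"site {intent['site']!r} is out of scope")
    vid = intent["vid"]
    if vid is None or not 1 <= vid <= 4094:
        errors.append(f"VLAN ID {vid!r} is outside 1-4094")
    elif intent["action"] == "create" and vid in existing_vids:
        errors.append(f"VLAN ID {vid} already exists")
    if not NAME_RE.match(intent["name"]):
        errors.append(f"name {intent['name']!r} violates naming policy")
    return errors


raw = {"action": "Create", "site": "AMS1 ", "vid": "0120", "name": "Guest WiFi"}
intent = normalise(raw)
print(intent)  # {'action': 'create', 'site': 'ams1', 'vid': 120, 'name': 'guest-wifi'}
print(evaluate_guards(intent, {100, 101}))  # []
```

Because the guards return every violation rather than only the first, the list can be shown to the operator at the approval step or fed back to the interpreting agent for a retry, without the model ever being the final judge of its own output.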

Model choice also mattered. In our experiments, qwen2.5:72b performed better than gpt-oss:120b for the orchestration tasks we tested. We do not generalise that beyond this specific setting, but it does suggest that reliable instruction-following and stable cooperation with workflow controls may matter more than model size alone.

For network engineers considering similar work, the most practical starting point is a narrow workflow that is easy to understand, verify, and reverse. AI can be useful in interpreting intent and supporting operator interaction, but infrastructure changes still require the same disciplines they always have: reliable data, validation before execution, approval before change, and verification afterwards.
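Verification afterwards and reversibility can both stay deterministic: compare the intended fields with the state read back from the source of truth, and derive the undo operation from a snapshot taken before the change. A minimal sketch under those assumptions; `verify_state`, `inverse`, and the `op` dictionaries are hypothetical, and the example models an update that was only partially applied.

```python
from typing import Any, Dict, Optional, Tuple


def verify_state(intended: Dict[str, Any],
                 observed: Optional[Dict[str, Any]]) -> Dict[str, Tuple[Any, Any]]:
    """Return {field: (intended, observed)} for every field that did not land."""
    observed = observed or {}
    return {k: (v, observed.get(k)) for k, v in intended.items() if observed.get(k) != v}


def inverse(before: Optional[Dict[str, Any]], intended: Dict[str, Any]) -> Dict[str, Any]:
    """Build the operation that restores the pre-change state."""
    if before is None:  # the change created the object, so undoing it deletes it
        return {"op": "delete"}
    return {"op": "update", "fields": {k: before.get(k) for k in intended}}


before = {"vid": 120, "name": "users", "status": "active"}    # pre-change snapshot
intended = {"name": "staff", "status": "reserved"}
observed = {"vid": 120, "name": "staff", "status": "active"}  # status did not land

mismatches = verify_state(intended, observed)
print(mismatches)  # {'status': ('reserved', 'active')}
if mismatches:
    print(inverse(before, intended))  # {'op': 'update', 'fields': {'name': 'users', 'status': 'active'}}
```

Keeping the workflow narrow is what makes this tractable: a single VLAN object has few fields, so both the check and the undo operation stay small enough to review at the approval step.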

For full documentation of this work, please visit: https://geant-netdev.gitlab-pages.pcss.pl/gp4ldocs/guides/playground/agentic_workflows/idea/